Artificial intelligence is rapidly becoming part of everyday software development.

Product teams integrate language models into features.

Data teams experiment with new training pipelines.

Operations teams explore AI assistants to automate workflows.

At first, these experiments feel small.

A few model calls here.

Some prompt testing there.

Maybe a prototype using embeddings or vector search.

Individually, none of these changes seems expensive. But once AI experiments spread across teams and projects, organizations often discover a new challenge.

AI spending behaves very differently from traditional cloud infrastructure.

This is why the FinOps Foundation introduced FinOps for AI. The concept helps organizations manage AI cost complexity, align spending with business value, and maintain governance without slowing innovation.

As AI adoption grows, the real challenge is not just managing costs. It is understanding where those costs originate and how they evolve.

Why AI Spending Is Harder to Control

Traditional cloud infrastructure usually follows predictable patterns. Teams deploy applications, scale infrastructure gradually, and monitor usage with well-established metrics such as compute hours, storage, and network traffic.

AI workloads introduce a completely different environment.

Organizations must now track costs across models, tokens, embeddings, inference requests, and training cycles. These signals are more granular than traditional infrastructure metrics, and they change rapidly depending on how AI services are used.

A single feature powered by an AI model may generate millions of tokens per day. If usage grows unexpectedly, the cost impact can escalate quickly without a visible infrastructure change.
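
To make that escalation concrete, here is a minimal sketch of the arithmetic. The prices and token volumes are assumptions chosen for easy math, not real vendor rates, and `TokenPrice` and `daily_cost` are hypothetical names used only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrice:
    """Hypothetical per-million-token prices in USD (not real vendor rates)."""
    input_per_million: float
    output_per_million: float


def daily_cost(input_tokens: int, output_tokens: int, price: TokenPrice) -> float:
    """Return the estimated USD cost for one day of token usage."""
    return (
        input_tokens / 1_000_000 * price.input_per_million
        + output_tokens / 1_000_000 * price.output_per_million
    )


# Assumed prices and volumes, chosen only to make the arithmetic easy to follow.
price = TokenPrice(input_per_million=2.0, output_per_million=8.0)
baseline = daily_cost(input_tokens=5_000_000, output_tokens=1_000_000, price=price)
after_growth = daily_cost(input_tokens=50_000_000, output_tokens=10_000_000, price=price)

print(f"baseline: ${baseline:.2f}/day, after 10x usage: ${after_growth:.2f}/day")
```

Nothing about the infrastructure changes between the two calls. Only the token counts do, which is why this kind of growth is easy to miss on a traditional infrastructure dashboard.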

This creates a new visibility challenge for engineering, finance, and operations teams.

Without real-time insight into how AI workloads behave, organizations often discover the financial impact long after the decisions that caused it were made.

Experimentation Drives Unpredictable Usage

AI development thrives on experimentation.

Teams constantly test new prompts, switch between models, or adjust pipelines to improve results. This rapid experimentation means workloads rarely stay stable for long.

One week a model may run occasionally in a prototype environment.

The next week it might power a production feature used by thousands of customers.

Because experimentation happens frequently, spending patterns can change faster than traditional forecasting models, which are often built around monthly or quarterly cycles, can track.

FinOps teams must adapt by using shorter forecasting cycles and by continuously reviewing consumption patterns across AI workloads.
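
One simple way to picture a shorter forecasting cycle is a trailing-average forecast with a smaller window. The sketch below is an illustration under assumed numbers, not a recommended forecasting model; `forecast_next_period` and the spend history are hypothetical.

```python
from statistics import mean


def forecast_next_period(daily_spend: list[float], window: int, horizon: int) -> float:
    """Project spend for the next `horizon` days from the mean of the last `window` days."""
    if window <= 0 or horizon <= 0:
        raise ValueError("window and horizon must be positive")
    recent = daily_spend[-window:]
    if not recent:
        raise ValueError("daily_spend must not be empty")
    return mean(recent) * horizon


# Hypothetical daily spend in USD: three quiet prototype weeks, then one week in production.
history = [10.0] * 21 + [100.0] * 7

monthly_view = forecast_next_period(history, window=28, horizon=7)
weekly_view = forecast_next_period(history, window=7, horizon=7)

print(f"next week, 28-day window: ${monthly_view:.2f}")
print(f"next week, 7-day window:  ${weekly_view:.2f}")
```

With this made-up history, the 28-day window still averages in the prototype weeks and projects far less than the 7-day window, which already reflects the production workload.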

Without this adaptive approach, financial planning becomes disconnected from how AI systems actually behave in production.

More People Are Building AI Applications

Another factor complicating AI cost management is the expanding group of people creating AI solutions.

In traditional cloud environments, infrastructure spending was mostly driven by engineering teams. With AI tools becoming easier to access, the situation is changing.

Product managers integrate AI capabilities into applications.

Marketing teams experiment with AI automation tools.

Operations teams deploy internal assistants powered by language models.

Many of these users are not infrastructure specialists. They may not understand how AI requests translate into compute costs or token consumption.

As a result, FinOps teams must work with a broader set of stakeholders than ever before. Education and enablement become essential so teams understand how their AI usage affects organizational spending.

A Rapidly Expanding AI Vendor Ecosystem

AI adoption also introduces a complex ecosystem of tools and vendors.

Organizations may rely on multiple hyperscale cloud providers while simultaneously using specialized AI platforms, SaaS AI services, or new AI-native infrastructure providers.

Each of these vendors introduces different pricing models and usage metrics.

An AI workflow may involve:

Cloud infrastructure for orchestration

Language models accessed through APIs

Vector databases for embeddings

Data pipelines for training and evaluation

Tracking cost behavior across these layers becomes extremely difficult without unified visibility.
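
As a rough sketch of what unified visibility can mean in practice, the example below normalizes spend from each layer into one record shape and then groups it. The `CostRecord` schema, vendor names, and amounts are all assumptions for illustration; real billing exports differ by vendor and need their own mapping step.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CostRecord:
    """One normalized line of spend; all field names are illustrative."""
    layer: str     # e.g. "orchestration", "model_api", "vector_db", "data_pipeline"
    vendor: str
    feature: str   # product feature or project the spend is allocated to
    usd: float


def total_by(records: list[CostRecord], key: str) -> dict[str, float]:
    """Sum spend grouped by one attribute of CostRecord."""
    totals: dict[str, float] = defaultdict(float)
    for record in records:
        totals[getattr(record, key)] += record.usd
    return dict(totals)


# Made-up records standing in for four different billing sources.
records = [
    CostRecord("orchestration", "cloud-a", "support-assistant", 40.0),
    CostRecord("model_api", "llm-vendor", "support-assistant", 120.0),
    CostRecord("vector_db", "vector-vendor", "support-assistant", 25.0),
    CostRecord("model_api", "llm-vendor", "search-summaries", 60.0),
    CostRecord("data_pipeline", "cloud-b", "search-summaries", 15.0),
]

by_layer = total_by(records, "layer")
by_feature = total_by(records, "feature")

print(by_layer)
print(by_feature)
```

Once every source lands in the same shape, the same records can answer both "which layer costs the most" and "which feature drives it" without a separate report per vendor.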

This complexity is one reason why FinOps for AI emphasizes stronger allocation models, better forecasting approaches, and deeper governance capabilities.

Governance Must Evolve Without Slowing Innovation

AI adoption is often driven by a desire to move quickly. Organizations want teams to experiment, discover new use cases, and build competitive capabilities.

However, innovation without visibility creates risk.

When AI usage grows faster than governance, organizations may face unexpected cost spikes, fragmented spending across vendors, and difficulty understanding which projects create real business value.

FinOps for AI does not aim to restrict innovation.

Instead, it encourages organizations to build governance frameworks that support experimentation while maintaining accountability. Policies, allocation models, and cost monitoring must evolve to reflect how AI systems actually operate.
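
One way to express "governance that supports experimentation" is a budget check that escalates instead of blocking. The sketch below is an assumption about how such a policy could look; the thresholds, `BudgetStatus`, and `check_budget` are hypothetical and not part of any FinOps Foundation specification.

```python
from enum import Enum


class BudgetStatus(Enum):
    OK = "ok"
    WARN = "warn"      # notify the owning team, keep the experiment running
    REVIEW = "review"  # ask for a cost review before further scale-up


def check_budget(spend_to_date: float, monthly_budget: float,
                 warn_ratio: float = 0.8) -> BudgetStatus:
    """Classify a project's spend against its budget without blocking any requests."""
    if monthly_budget <= 0:
        raise ValueError("monthly_budget must be positive")
    ratio = spend_to_date / monthly_budget
    if ratio >= 1.0:
        return BudgetStatus.REVIEW
    if ratio >= warn_ratio:
        return BudgetStatus.WARN
    return BudgetStatus.OK


# Hypothetical projects, each with a 1,000 USD monthly budget.
for name, spend in [("prompt-tests", 500.0), ("assistant-pilot", 850.0), ("search-beta", 1200.0)]:
    print(name, check_budget(spend, monthly_budget=1000.0).value)
```

The design choice here is that no status stops a workload. Each one only changes who gets notified, which keeps accountability visible while teams keep experimenting.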

Visibility Is the Foundation of AI FinOps

Across all these challenges, one principle becomes clear.

Managing AI costs begins with visibility.

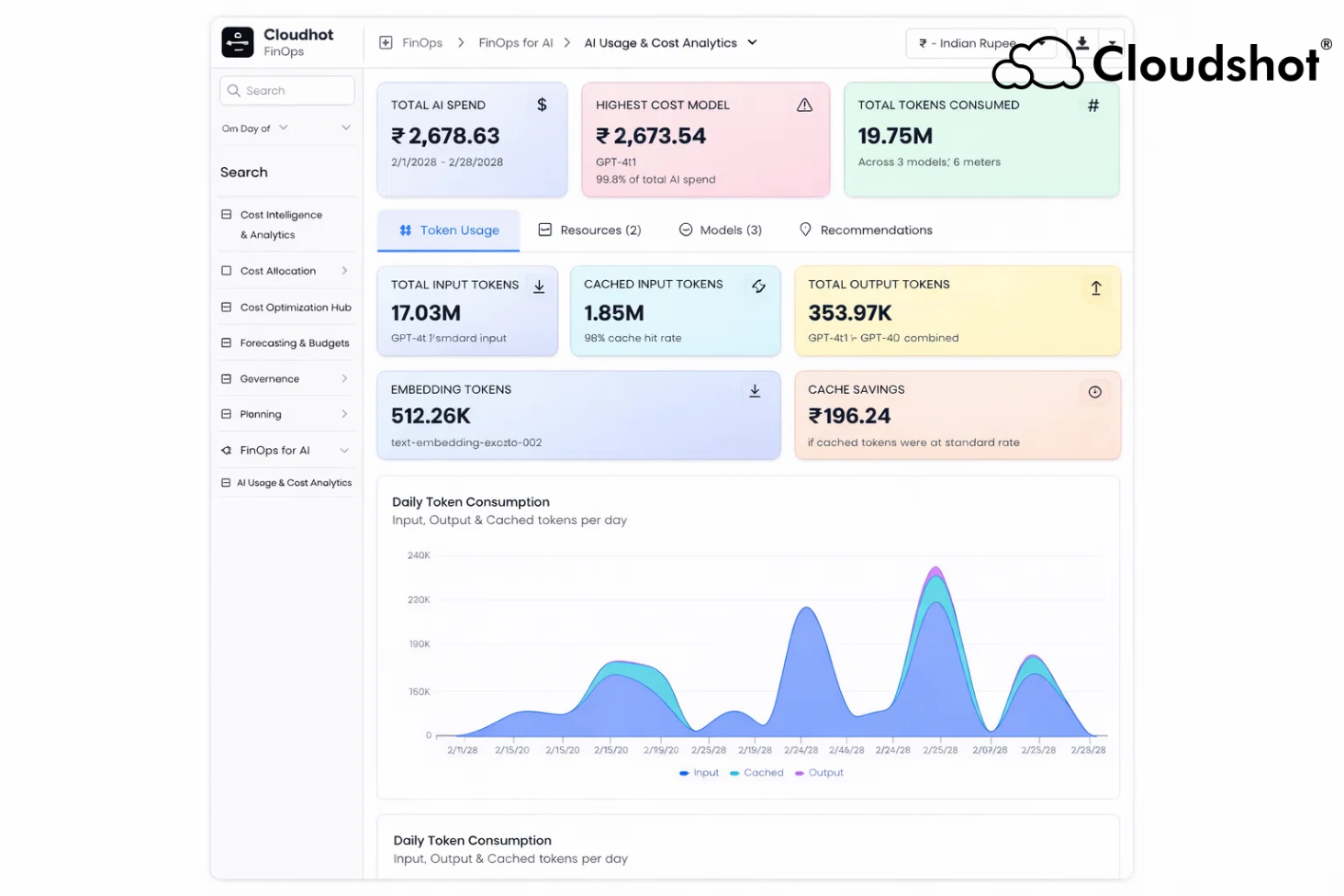

Organizations must understand:

Which models generate the most consumption

How token usage changes over time

Which teams or products drive spending

Where optimization opportunities exist

Without that visibility, FinOps teams operate reactively. They analyze invoices after costs appear rather than guiding decisions while workloads are running.
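
The first three questions in the list above can be answered from a plain usage log. The sketch below assumes a hypothetical `UsageEvent` schema and made-up events; a real log would come from whatever gateway or provider usage export an organization already has.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class UsageEvent:
    """One AI request as it might appear in a usage log (hypothetical schema)."""
    day: date
    model: str
    team: str
    tokens: int


# Made-up events for illustration only.
events = [
    UsageEvent(date(2025, 1, 6), "large-model", "product", 400_000),
    UsageEvent(date(2025, 1, 6), "small-model", "marketing", 50_000),
    UsageEvent(date(2025, 1, 7), "large-model", "product", 900_000),
    UsageEvent(date(2025, 1, 7), "small-model", "operations", 150_000),
]

tokens_by_model = Counter()
tokens_by_team = Counter()
tokens_by_day = Counter()
for event in events:
    tokens_by_model[event.model] += event.tokens
    tokens_by_team[event.team] += event.tokens
    tokens_by_day[event.day] += event.tokens

top_model, top_model_tokens = tokens_by_model.most_common(1)[0]
top_team, top_team_tokens = tokens_by_team.most_common(1)[0]

print(f"top model: {top_model} ({top_model_tokens} tokens)")
print(f"top team:  {top_team} ({top_team_tokens} tokens)")
for day in sorted(tokens_by_day):
    print(day.isoformat(), tokens_by_day[day])
```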

Real-time insight into AI infrastructure behavior allows organizations to shift from reactive reporting to proactive cost management.

For a deeper look at how visibility challenges impact cloud environments, explore Cloudshot's perspective on fragmented cloud visibility costs: https://cloudshot.io/blogs/fragmented-cloud-visibility-cost/?r=ofp

Bringing AI Usage and Cloud Infrastructure Into One View

This is where modern cloud visibility platforms become essential.

Tools like Cloudshot help teams connect infrastructure behavior, AI workload activity, and financial signals in a single view.

Instead of analyzing disconnected dashboards and reports, teams can observe how architecture decisions influence spending patterns across multi-cloud environments.

Cloudshot provides capabilities such as:

Real-time infrastructure visibility across AWS, Azure, and GCP

Monitoring of workload behavior and usage spikes

Early detection of anomalies that may affect performance or cost

Improved collaboration between engineering, security, and finance teams

This level of visibility allows organizations to identify problems before they evolve into financial surprises.
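
For readers who want a feel for what early anomaly detection involves, here is a generic sketch based on a simple z-score over trailing daily spend. It is independent of any platform and does not describe how Cloudshot detects anomalies; the function name, threshold, and numbers are assumptions.

```python
from statistics import mean, stdev


def is_spend_anomaly(history: list[float], today: float, threshold: float = 3.0) -> bool:
    """Flag `today` if it is more than `threshold` standard deviations above the trailing mean."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        return today > baseline  # perfectly flat history: any increase stands out
    return (today - baseline) / spread > threshold


# Hypothetical trailing week of daily AI spend in USD.
trailing_week = [100.0, 104.0, 98.0, 101.0, 97.0, 103.0, 99.0]

print(is_spend_anomaly(trailing_week, today=102.0))
print(is_spend_anomaly(trailing_week, today=180.0))
```

A fixed z-score is a blunt tool, since weekly seasonality or a planned launch will trip it, but it shows why a spike can be caught on the day it happens rather than on the next invoice.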

You can explore the broader platform capabilities here: https://cloudshot.io/?r=ofp

The Future of FinOps in an AI-Driven Cloud

Artificial intelligence will continue transforming how organizations build software, automate processes, and analyze data.

But as AI systems grow more complex, so will the challenges of managing their cost and usage.

FinOps for AI provides a framework to address that complexity. By adapting traditional FinOps principles to AI workloads, organizations can maintain financial discipline while continuing to innovate.

The companies that succeed in this environment will not simply build AI faster. They will understand the behavior of their AI systems, monitor their infrastructure in real time, and ensure every experiment aligns with business value.

Start building that visibility now, before the next AI experiment quietly rewrites your cloud spending.