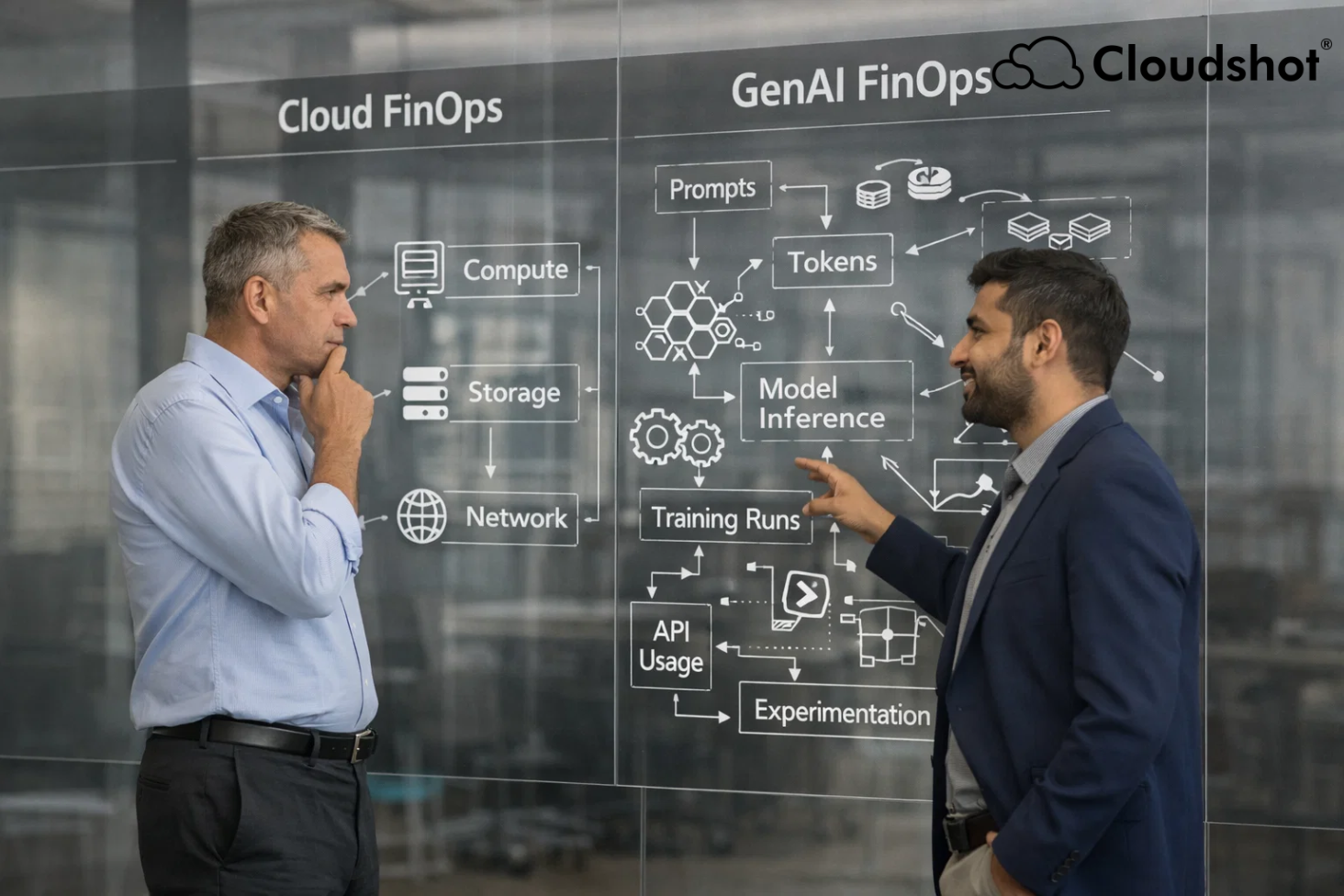

Cloud FinOps helped organizations control spending when workloads moved to the cloud.

Teams learned how to manage compute, storage, and networking costs by observing infrastructure usage patterns and optimizing resources.

Generative AI is introducing a different financial dynamic.

AI workloads behave differently from traditional cloud systems. Their costs are driven by tokens, model requests, and experimentation cycles rather than just infrastructure consumption.

Because of this shift, the FinOps community is now distinguishing between Cloud FinOps and GenAI FinOps.

Different Cost Drivers

Traditional cloud costs are tied to infrastructure resources. Organizations pay for servers, storage capacity, networking traffic, and managed services.

Generative AI workloads introduce new cost signals such as token consumption, inference requests, and model usage.

Even a small change in prompts or model configuration can increase token usage significantly. For example, a longer system prompt adds its extra tokens to every single request that uses it.

As AI features scale across applications, those tokens accumulate quickly and impact budgets.

This makes AI spending harder to track using traditional infrastructure metrics.
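
To make the token arithmetic concrete, here is a minimal sketch of how a prompt change can flow through to a monthly bill. Everything in it is an assumption for illustration: the `PromptProfile` and `monthly_cost` names, the token counts, the request volume, and the per-1K-token prices are hypothetical and do not reflect any vendor's actual pricing.

```python
from dataclasses import dataclass


@dataclass
class PromptProfile:
    """Average token counts for one request (hypothetical figures)."""
    input_tokens: int
    output_tokens: int


def monthly_cost(profile, requests_per_day, input_price_per_1k,
                 output_price_per_1k, days=30):
    """Estimate monthly spend from per-request tokens and per-1K-token prices."""
    per_request = (
        profile.input_tokens / 1000 * input_price_per_1k
        + profile.output_tokens / 1000 * output_price_per_1k
    )
    return per_request * requests_per_day * days


# Hypothetical prices, in arbitrary currency units per 1,000 tokens.
INPUT_PRICE = 0.001
OUTPUT_PRICE = 0.002

baseline = PromptProfile(input_tokens=500, output_tokens=200)
# Same feature after a longer system prompt is added to every request.
revised = PromptProfile(input_tokens=1500, output_tokens=200)

baseline_cost = monthly_cost(baseline, 10_000, INPUT_PRICE, OUTPUT_PRICE)
revised_cost = monthly_cost(revised, 10_000, INPUT_PRICE, OUTPUT_PRICE)

print(f"baseline: {baseline_cost:.2f}, revised: {revised_cost:.2f}")
```

With these made-up numbers the estimate roughly doubles, even though no infrastructure metric such as CPU or storage has changed at all.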

Experimentation Creates Unpredictable Spending

Cloud workloads usually follow predictable scaling patterns based on user traffic.

Generative AI development operates very differently.

Teams constantly test prompts, compare models, and refine pipelines to improve output quality.

These experiments can change usage patterns daily.

A prototype feature can suddenly scale once it becomes part of a product workflow.

Forecasting therefore becomes more complex, requiring more frequent cost monitoring and adjustments.
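
As a rough illustration of what more frequent monitoring could look like, the sketch below flags days where token usage jumps well above a trailing average. It is one simple approach under stated assumptions, not a recommended standard: the `find_spikes` name, the 7-day window, the 2x threshold, and the sample data are all hypothetical.

```python
from statistics import mean


def find_spikes(daily_tokens, window=7, threshold=2.0):
    """Return indices of days whose usage exceeds `threshold` times
    the mean of the preceding `window` days."""
    spikes = []
    for i in range(window, len(daily_tokens)):
        baseline = mean(daily_tokens[i - window:i])
        if baseline > 0 and daily_tokens[i] > threshold * baseline:
            spikes.append(i)
    return spikes


# Hypothetical daily token counts: a quiet week, then a prototype goes live.
usage = [100, 100, 100, 100, 100, 100, 100, 105, 400, 110]
spike_days = find_spikes(usage)
print(spike_days)
```

A trailing average is deliberately crude. A real setup would likely tune the window and threshold per team or per feature, since experimentation-heavy workloads are noisy by nature.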

More Teams Influence AI Spending

Cloud infrastructure spending was historically driven by engineering teams.

AI tools are now accessible across organizations.

Product managers, analysts, and operations teams can integrate AI services into workflows without deep infrastructure knowledge.

This expands the number of people whose everyday decisions generate cloud and AI spend.

FinOps teams must now collaborate with a broader set of stakeholders and educate them about how AI usage affects cost behavior.

A Growing AI Vendor Ecosystem

Traditional cloud environments typically rely on a small number of hyperscale providers.

Generative AI introduces a much wider ecosystem.

Organizations may combine cloud providers, model vendors, SaaS AI platforms, and specialized AI infrastructure.

A single AI feature may involve several services working together.

Tracking how those services contribute to cost requires stronger visibility into how workloads interact across environments.

For example, the challenges of fragmented visibility in cloud environments are discussed in this Cloudshot article: https://cloudshot.io/blogs/fragmented-cloud-visibility-cost/?r=ofp

Visibility Is the Key to Managing AI Costs

Managing AI costs requires more than traditional infrastructure monitoring.

Organizations must understand which models generate usage, how token consumption evolves, and which applications drive spending.

Without that visibility, FinOps teams can only analyze costs after the invoice appears.
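
One way to picture this kind of visibility is to roll up usage records by model and by application. The sketch below assumes each AI call is logged with hypothetical `app`, `model`, `tokens`, and `cost` fields. The record shape, the sample values, and the `spend_by` helper are illustrative only and are not tied to any particular platform.

```python
from collections import defaultdict


def spend_by(records, key):
    """Sum the `cost` field per distinct value of `key`, highest spend first."""
    totals = defaultdict(float)
    for record in records:
        totals[record[key]] += record["cost"]
    return dict(sorted(totals.items(), key=lambda kv: kv[1], reverse=True))


# Hypothetical usage log entries; field names and values are made up.
records = [
    {"app": "support-bot", "model": "large-model", "tokens": 12000, "cost": 1.5},
    {"app": "support-bot", "model": "small-model", "tokens": 8000, "cost": 0.5},
    {"app": "search", "model": "large-model", "tokens": 16000, "cost": 2.0},
]

by_model = spend_by(records, "model")
by_app = spend_by(records, "app")
print(by_model)
print(by_app)
```

The same records answer two different questions depending on the grouping key, which is why tagging each request with both its model and its owning application at call time matters.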

Platforms like Cloudshot help teams observe infrastructure and workload behavior in real time. This allows organizations to detect usage spikes and understand system behavior before costs escalate.

Learn more about the platform here: https://cloudshot.io/?r=ofp

The Future of FinOps for AI

Generative AI is transforming how organizations build products and automate processes.

But it also introduces new complexity in cost management.

GenAI FinOps is emerging as a practice designed to address this shift. By adapting FinOps principles to AI workloads, organizations can maintain financial discipline while continuing to innovate.

The teams that succeed with AI will not just experiment quickly.

They will build the visibility required to understand how AI systems consume resources, before the next AI experiment quietly rewrites your cloud bill.